Building a data center? Here are three things to keep in mind if you want to keep up with the digital shift.

The COVID pandemic has had a drastic impact on society, and not only on our health. On the industrial side, it has pushed the ‘digital’ revolution forward, from how we hold business meetings to the way we learn to operate factories. This sudden digital shift, together with the latest developments in 5G communication, is stimulating demand for more cloud-based and online solutions, which in turn is motivating huge investments in IT infrastructure such as data centers. In this article, Hiroki Nishiyama, global manager of data center marketing at Mitsubishi Electric Corporation, explains the challenges facing this important and growing industry.

“The recent changes triggered by the digital shift and how we communicate are driving huge investments in data centers. In fact, the global data center market is expected to increase by around 8% over the next ten years compared to 2020,” comments Hiroki Nishiyama. “If we only focus on data center infrastructure management (DCIM), then the growth rate is even higher at 17.5%.” These figures show that data center investments will continue to grow, which means more data centers will be built globally, with more “digitally” managed operation systems being installed.

One of the questions that needs to be considered is “what is required in a data center?” Hardware such as IT servers is both obvious and essential, but it is not enough to keep a data center running. There are three principal points that should be considered when building data centers: sustainability, efficiency, and redundancy.

Increasing energy efficiency for cooling IT servers

Energy efficiency is a key aspect of running a data center, as these facilities naturally consume a great amount of electricity: powering the essential IT servers 24/7, providing backup systems in the form of uninterruptible power supplies (UPS) and, last but not least, cooling the facility. What is often overlooked by the layperson is that IT servers produce heat. In fact, it can be a surprising amount of heat. That heat is wasted energy and, if left unchecked, will over time contribute to a gradual degradation of the electrical components, hastening the failure of the all-important IT servers. This is why cooling is important: it must be carried out in the most energy-efficient way so as not to add to the waste, and it must maintain an optimum environment to sustain the performance of the servers for as long as possible.

Nishiyama adds more background, “To illustrate how much heat is generated, let’s assume a server room contains 50 servers. Together they would generate around 17-18 kilowatts of heat. That’s like having 15 average heaters or a small furnace running continuously. But that’s not all, there are also additional thermal effects from the UPS, routers and switches, lighting as well as exposure from any windows which may be present. So overall a lot of heat is being generated and potentially stored.” Nishiyama further notes, “Actually, on average electronic devices like servers are running at 30-40 degrees Celsius, and the common wisdom is to cool the data center to around 18-25 degrees Celsius, which is a lot of continuous cooling. So, ironically, all this heat needs more power consumption to generate cooling. And that means electricity bills are just getting bigger by the day, and that is why energy efficiency is one of the highest priorities.”
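As a rough check on the figures quoted above, the short Python sketch below works out what they imply per server, per heater and per day. Only the 50 servers, the 17-18 kW range and the 15 heaters come from the article; the function names are illustrative, and the calculation assumes the heat output is constant around the clock.

```python
# Figures quoted in the article.
SERVERS = 50
TOTAL_HEAT_KW = (17.0, 18.0)   # lower and upper estimate
HEATERS = 15

# Standard unit conversion (rounded): 1 W is about 3.412 BTU/h.
BTU_PER_HOUR_PER_WATT = 3.412


def per_server_watts(total_kw: float, servers: int) -> float:
    """Average heat output of one server in watts."""
    return total_kw * 1000 / servers


def per_heater_kw(total_kw: float, heaters: int) -> float:
    """Heat output each 'average heater' would need to match the room."""
    return total_kw / heaters


def daily_energy_kwh(total_kw: float, hours: float = 24.0) -> float:
    """Heat energy released over a period, assuming constant output."""
    return total_kw * hours


for kw in TOTAL_HEAT_KW:
    print(
        f"{kw:.0f} kW total: "
        f"{per_server_watts(kw, SERVERS):.0f} W per server, "
        f"{per_heater_kw(kw, HEATERS):.2f} kW per heater, "
        f"{daily_energy_kwh(kw):.0f} kWh of heat per day, "
        f"{kw * 1000 * BTU_PER_HOUR_PER_WATT:.0f} BTU/h"
    )
```

A kilowatt is already a rate, so the energy that the cooling system has to remove grows with every hour the servers run, which is why the daily figure is the one that shows up on the electricity bill.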

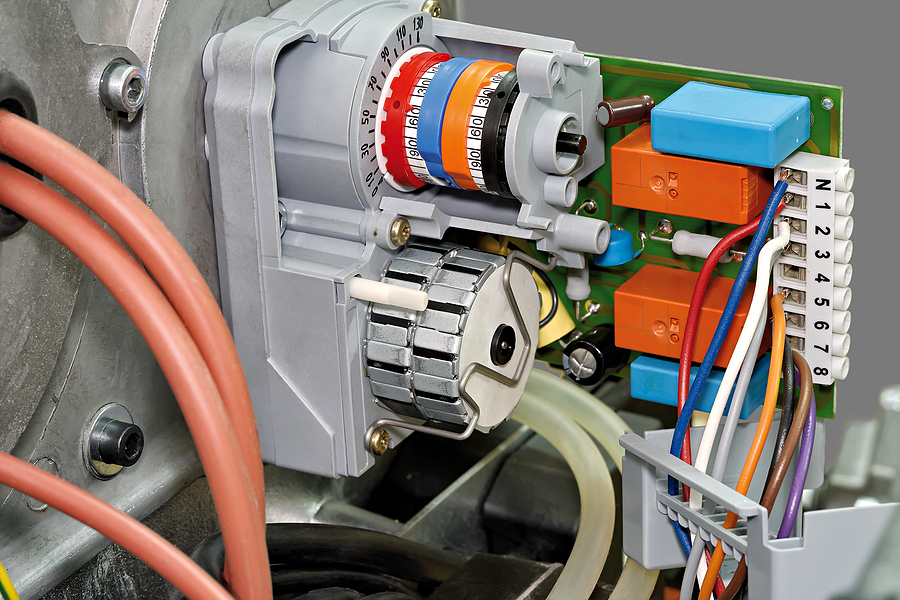

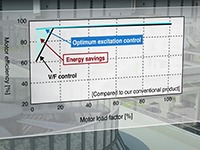

There are lots of imaginative ways to reduce the heat in data centers, from pioneering work to run data centers in the sea, to taking more immediate steps of turning lights off, using LEDs where necessary, reducing windows, and improving the energy efficiency of air handling and conditioning through the use of inverters on motors that drive pumps, compressors and fans. “Let’s consider an air handling system. Power consumption can be reduced by adjusting the amount of airflow by controlling the motor with a frequency inverter,” explains Nishiyama.

“In fact, you often don’t need to be an inverter specialist to set the special parameters according to the application or load as many devices can automatically streamline motor control with auto-tuning features.”
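The saving from adjusting airflow with an inverter can be estimated with the fan affinity laws, under which airflow scales with fan speed and shaft power scales with the cube of fan speed. The Python sketch below is an idealized estimate only: it ignores static pressure, motor and inverter losses, and the 7.5 kW rated power is a hypothetical value, not a figure from the article.

```python
def fan_power_kw(rated_power_kw: float, airflow_fraction: float) -> float:
    """Estimate fan power at reduced airflow using the cube law.

    airflow_fraction is the required airflow divided by rated airflow
    (0.0 to 1.0). Idealized: real systems deviate from the cube law.
    """
    if not 0.0 <= airflow_fraction <= 1.0:
        raise ValueError("airflow_fraction must be between 0 and 1")
    return rated_power_kw * airflow_fraction ** 3


RATED_POWER_KW = 7.5  # hypothetical fan motor rating

for fraction in (1.0, 0.9, 0.8, 0.7, 0.5):
    power = fan_power_kw(RATED_POWER_KW, fraction)
    saving = 1.0 - power / RATED_POWER_KW
    print(f"{fraction:.0%} airflow: {power:.2f} kW ({saving:.0%} saving)")
```

Under these assumptions, running a fan at 80% of its rated airflow needs only about half of the rated power, which is why variable-speed control tends to pay off on loads that rarely need full airflow.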

Newer cooling equipment, such as cooling towers and air handling units, often has inverters fitted as standard. Where it does not, inverters can be relatively easy to retrofit and can quickly contribute to minimizing power consumption, leading to cost efficiency and sustainability.

If cooling is so important, then another concern might come to mind: “What would happen if the cooling system fails after some years?” In a data center, equipment failure can be critical for continuous operation, especially if the facility is serving financial transactions.

“There are inverters that support built-in preventive maintenance features, so this might be a solution,” suggests Nishiyama. If load characteristics are monitored in real time, degrading performance can be detected at an early stage. Such preventive maintenance features enable operators to notice abnormalities such as filter clogging or the effects of worn bearings, making maintenance easier to schedule in good time and helping to reduce downtime.
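The idea of spotting degradation from load characteristics can be sketched as a simple drift check. The Python example below is not how any particular inverter implements preventive maintenance. It assumes a hypothetical stream of motor current readings taken at a fixed operating point, and flags when their rolling average moves more than a chosen tolerance away from a healthy baseline.

```python
from collections import deque
from statistics import mean


class LoadDriftMonitor:
    """Flag when the rolling mean of a load reading drifts from its baseline."""

    def __init__(self, baseline: float, tolerance: float = 0.10, window: int = 5):
        if baseline <= 0:
            raise ValueError("baseline must be positive")
        self.baseline = baseline
        self.tolerance = tolerance          # allowed relative drift, e.g. 0.10 = 10%
        self.samples = deque(maxlen=window)

    def add(self, value: float) -> bool:
        """Record a reading; return True if the rolling mean is out of tolerance."""
        self.samples.append(value)
        if len(self.samples) < self.samples.maxlen:
            return False                    # not enough data yet
        drift = abs(mean(self.samples) - self.baseline) / self.baseline
        return drift > self.tolerance


# Hypothetical motor current readings (amps): stable, then slowly rising,
# as might happen when a filter clogs or a bearing wears.
readings = [10.0, 10.1, 9.9, 10.2, 10.0, 10.8, 11.4, 11.9, 12.3, 12.6]

monitor = LoadDriftMonitor(baseline=10.0)
for index, amps in enumerate(readings):
    if monitor.add(amps):
        print(f"Reading {index}: drift detected, schedule an inspection")
        break
```

Averaging over a window rather than reacting to single readings keeps one noisy sample from raising an alert, at the cost of detecting a real change a few readings later.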

Visualizing the status of the data center to streamline operations

Considering the issue of maintenance, visualizing the status of the facilities can also help keep the data center running continuously and reduce the workload of maintenance engineers through clear and effective communication of the current status.

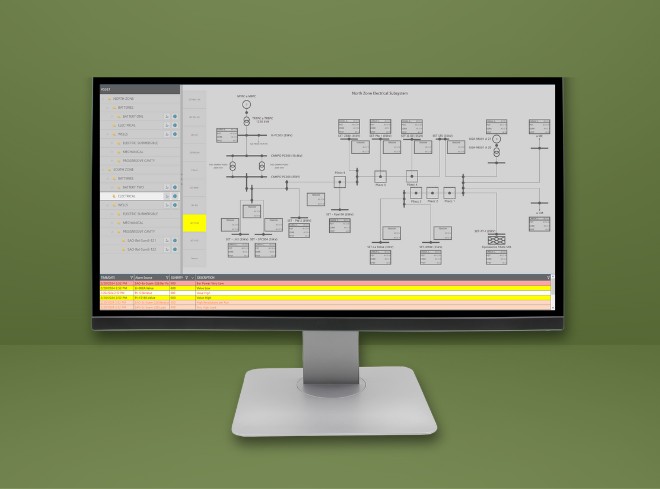

“Advanced visualization can help monitor multiple subsystems, from electricity and lighting to air conditioning and even disaster prevention systems,” Nishiyama explains. “A flexible SCADA system can help support expanding operations through to the implementation of redundant monitoring systems as the need arises.”

Nishiyama adds, “With a SCADA system, such as GENESIS64 for example, the real-time status of the data center can be monitored through graphical displays of the data from various subsystems. Alarms can even be sent via email when something goes wrong. And in the case of an equipment fault, the SCADA monitoring system can be configured to list the potential causes in order of probability to make troubleshooting tasks easier.”

By further utilizing cloud services in combination with SCADA systems, larger volumes of data can be processed, potentially achieving integrated monitoring of multiple data centers. That becomes more important as the number of data centers being built around the world, from hyper-scale to micro data centers, is increasing. The use of Geo-SCADA systems will enable remote monitoring from a variety of terminals such as PCs, mobile devices, and smart glasses to help facilitate the monitoring and maintenance of these locations.

Reducing risks through redundant systems

One of the other priorities for data centers is to keep the facility running, no matter what happens. Redundancy is therefore a very important focus, which not only applies to the servers themselves, but also to the various operational systems such as power supplies, cooling or security systems since they are critical factors in maintaining the data center environment.

“The precise control and integration of HVAC systems to maintain the humidity, temperature and other air-quality factors that affect the data center environment is often ‘managed’ by programmable controllers (PLCs) due to their rugged industrial specifications,” Nishiyama explains. “To reduce risks, data centers typically install redundant cooling systems where a duplicate, standby PLC may be installed in parallel to the main PLC. And in case something goes wrong with the power source or even in emergency situations like fire in the equipment room, the control will be switched instantaneously from the main PLC to the standby PLC.”

Redundant PLC systems not only make it possible to build a highly flexible air conditioning system, but can also be used to ensure the reliability of other critical equipment, such as chillers, that cannot be shut down during data center operations. “Traditionally such redundant PLCs could be very costly since they were built of many dedicated parts, which in turn added to the maintenance burden due to their specialist nature. However, more recently, high reliability PLC systems, such as Mitsubishi Electric’s MELSEC iQ-R, offer a hybrid solution where a single specialist redundant switching module can be added to a standard industrial PLC. This reduces the overall system cost and any impact on maintenance, but with no compromise on redundancy due to the high quality and ruggedness of today’s PLC components,” adds Nishiyama.

For redundant PLC systems, it is also essential that the main and standby PLCs are physically separated, with different power sources and installation locations, to maximize the “protection”. In such circumstances the main and standby PLCs should be linked by an optical fiber tracking cable, which avoids problems from electrical noise, allows a reasonable distance between the two PLCs, and keeps the switching time short in an emergency.

“Using redundant PLCs really is a ‘Goldilocks’ issue. You should be careful when planning the location of the two PLCs that they are not too far apart, but equally they should not be too close together either and definitely not placed in the same cabinet, as that would defeat the purpose of the redundant system,” Nishiyama emphasized. “It’s important to target a high-speed system with a switching time of around 10 milliseconds or less to enable continuous control with high reliability.”
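To make the switching-time target more concrete, the Python sketch below simulates a standby controller that takes over when heartbeats from the main controller stop arriving. It is a conceptual illustration only and does not describe how MELSEC iQ-R or any other redundant PLC performs switchover. The 10 ms budget comes from the quote above, while the 5 ms heartbeat timeout, the 1 ms heartbeat interval and all class and function names are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional

SWITCH_BUDGET_MS = 10.0      # target from the article: around 10 ms or less
HEARTBEAT_TIMEOUT_MS = 5.0   # hypothetical detection threshold


@dataclass
class StandbyController:
    """Standby unit that takes control when the main unit goes silent."""

    timeout_ms: float = HEARTBEAT_TIMEOUT_MS
    last_heartbeat_ms: float = 0.0
    active: bool = False

    def on_heartbeat(self, now_ms: float) -> None:
        """Called whenever a heartbeat from the main controller arrives."""
        self.last_heartbeat_ms = now_ms

    def poll(self, now_ms: float) -> bool:
        """Check for a missed heartbeat; return True once standby is in control."""
        if not self.active and now_ms - self.last_heartbeat_ms > self.timeout_ms:
            self.active = True
        return self.active


def simulate(main_fails_at_ms: int, duration_ms: int = 50) -> Optional[int]:
    """Step time in 1 ms ticks; return the tick at which the standby takes over."""
    standby = StandbyController()
    for now in range(duration_ms + 1):
        if now <= main_fails_at_ms:        # main sends a heartbeat every 1 ms
            standby.on_heartbeat(float(now))
        if standby.poll(float(now)):
            return now
    return None


failure_ms = 20
takeover_ms = simulate(failure_ms)
if takeover_ms is not None:
    delay = takeover_ms - failure_ms
    print(f"Standby took over {delay} ms after the last heartbeat "
          f"(budget {SWITCH_BUDGET_MS:.0f} ms)")
```

The detection timeout has to sit comfortably inside the overall switching budget, because the standby still needs time to assume control after it notices the failure. A low-latency link between the two units makes a short timeout practical without causing false switchovers.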

So, how do we build a reliable data center system?

In this era of increasing demand for digital services, the pressure is also rising on providers to prevent even brief downtime, which makes data center availability a critical factor. In fact, today, if one of the big “cloud” providers has an outage, even for just 30 minutes, it makes headline news around the world.

“Since the demand for digital services is increasing and data centers are being built to meet that demand, it is important to remember it’s not just about the IT, but also how to manage your systems and make them redundant, efficient, and sustainable,” Nishiyama explains. Small, practical steps that leverage known technologies, such as using inverters for increased energy efficiency in cooling systems, visualizing the overall status of the data center to streamline operations and, above all, reducing risks through redundant systems, are all achievable at minimum cost with maximum returns. Nishiyama concludes, “Working with partners that have know-how, experience and above all an eco-system of products, solutions and partners behind them is a great way to mitigate risks and manage costs in the long run.”
